Replayable runtime forensics for AI agents.

AISecOps reconstructs execution history from structured runtime events: execution plans, policy evaluations, provenance chains, approval flows, execution paths, and final governance outcomes.

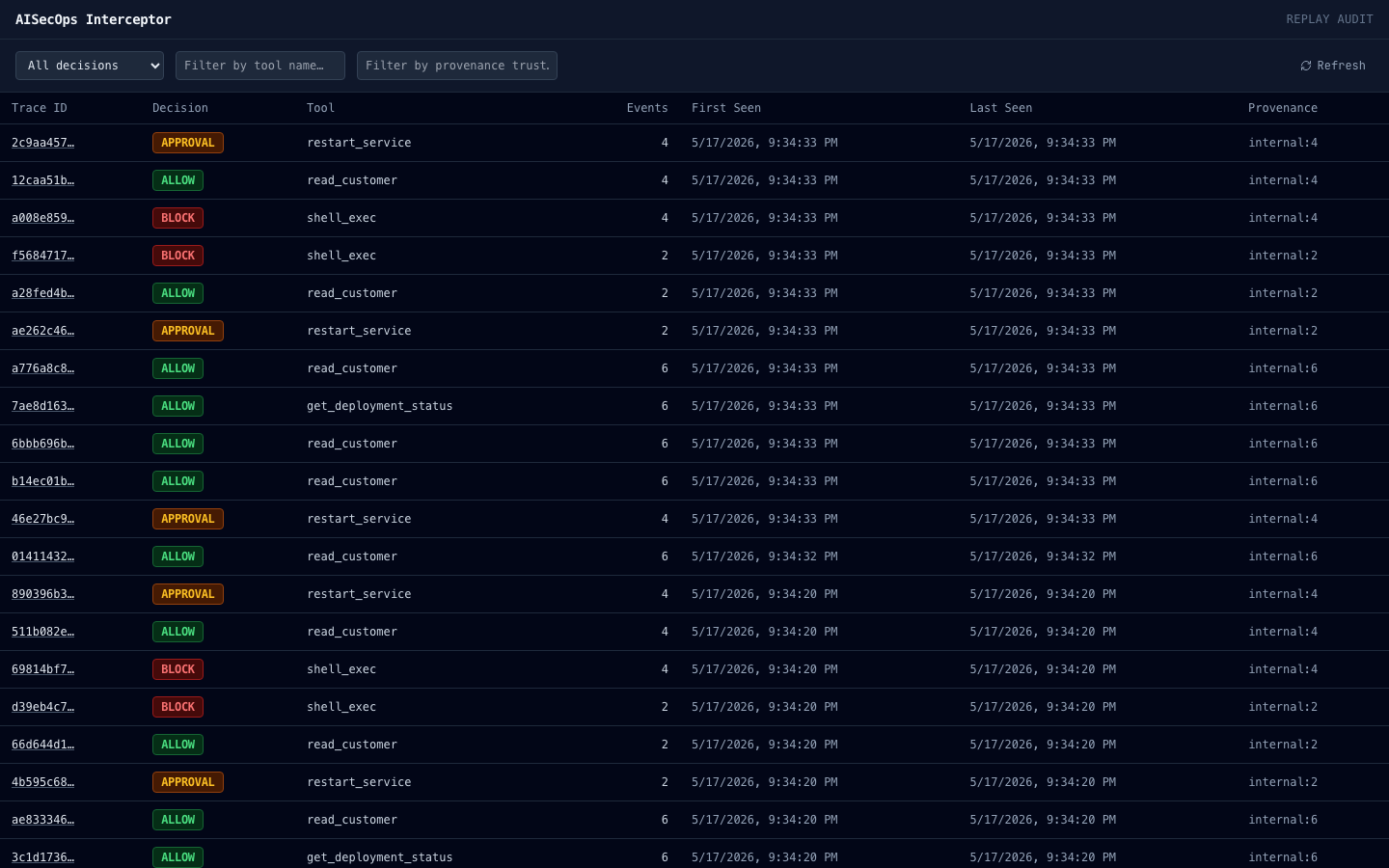

Investigate runtime decisions across traces.

Replay summaries expose runtime decisions, provenance trust levels, event counts, and execution outcomes for forensic investigation.
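To make the idea of a replay summary concrete, here is a minimal sketch that folds a flat stream of structured runtime events into a per-trace summary. It assumes an event schema (`RuntimeEvent` with `trace_id`, `seq`, `kind`, `decision`, `trust`) and a `summarize` helper that are hypothetical and chosen for illustration; they are not AISecOps' actual data model or API.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


# Hypothetical event record; not the actual AISecOps schema.
@dataclass(frozen=True)
class RuntimeEvent:
    trace_id: str
    seq: int                        # position of the event within its trace
    kind: str                       # e.g. "plan", "policy_eval", "approval", "tool_exec", "outcome"
    decision: Optional[str] = None  # policy decision, or the final outcome for kind == "outcome"
    trust: Optional[str] = None     # provenance trust level, e.g. "trusted" / "untrusted"


@dataclass
class ReplaySummary:
    trace_id: str
    event_count: int
    decisions: Counter              # policy decisions and how often each occurred
    trust_levels: set               # distinct provenance trust levels seen in the trace
    outcome: Optional[str]          # last recorded outcome, if any


def summarize(events, trace_id):
    """Build a summary for one trace from a flat list of events (hypothetical helper)."""
    trace = sorted((e for e in events if e.trace_id == trace_id), key=lambda e: e.seq)
    decisions = Counter(e.decision for e in trace if e.kind == "policy_eval" and e.decision)
    trust_levels = {e.trust for e in trace if e.trust}
    outcomes = [e.decision for e in trace if e.kind == "outcome"]
    return ReplaySummary(trace_id, len(trace), decisions, trust_levels,
                         outcomes[-1] if outcomes else None)


events = [
    RuntimeEvent("t1", 0, "plan", trust="trusted"),
    RuntimeEvent("t1", 1, "policy_eval", decision="require_approval", trust="untrusted"),
    RuntimeEvent("t1", 2, "approval", decision="approved"),
    RuntimeEvent("t1", 3, "tool_exec"),
    RuntimeEvent("t1", 4, "outcome", decision="allowed"),
    RuntimeEvent("t2", 0, "plan"),  # belongs to a different trace, so it is ignored
]
s = summarize(events, "t1")
print(s.event_count, dict(s.decisions), sorted(s.trust_levels), s.outcome)
# prints: 5 {'require_approval': 1} ['trusted', 'untrusted'] allowed
```

Filtering by `trace_id` before aggregating keeps each summary scoped to a single run, which is what lets summaries be compared side by side across traces.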

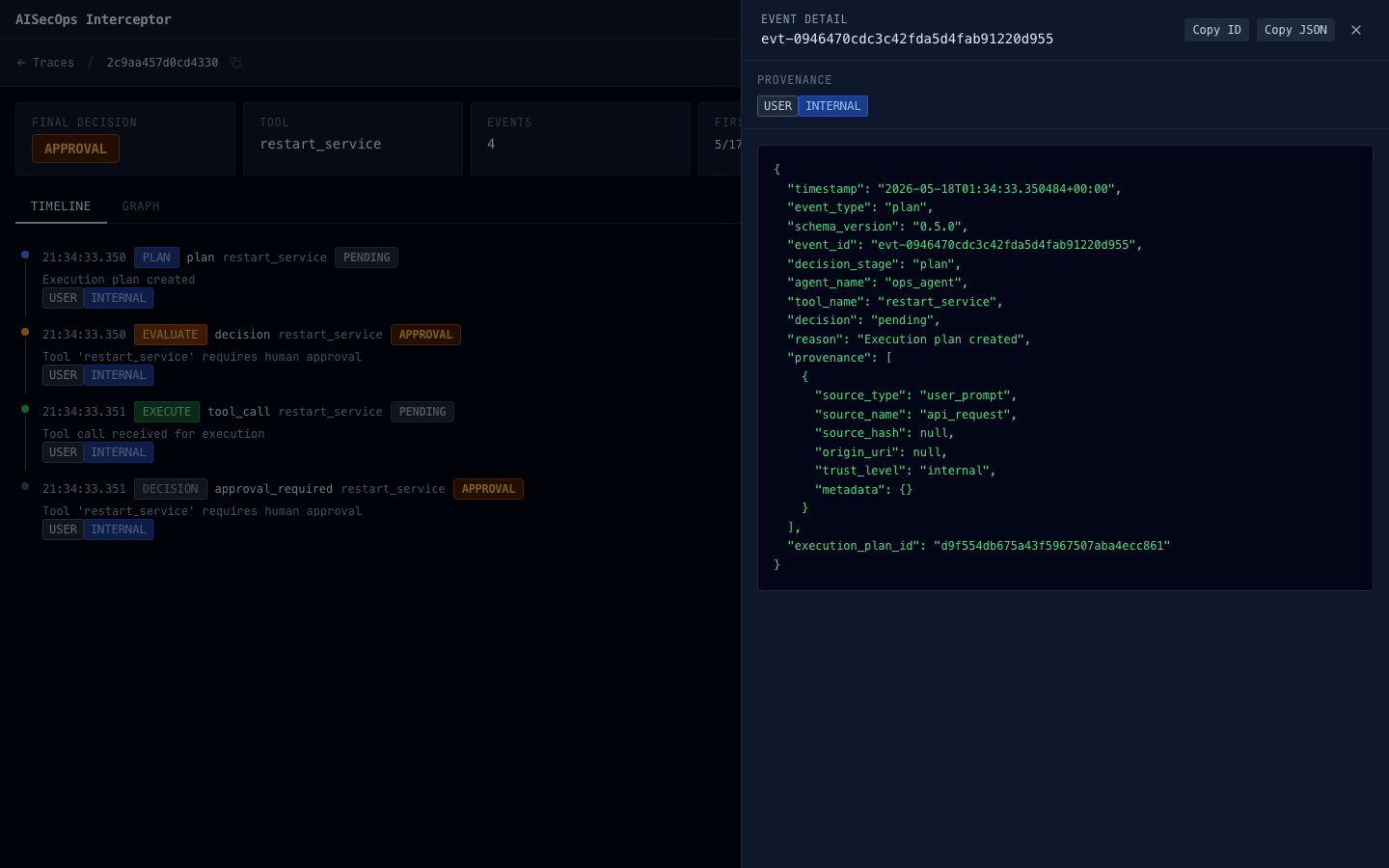

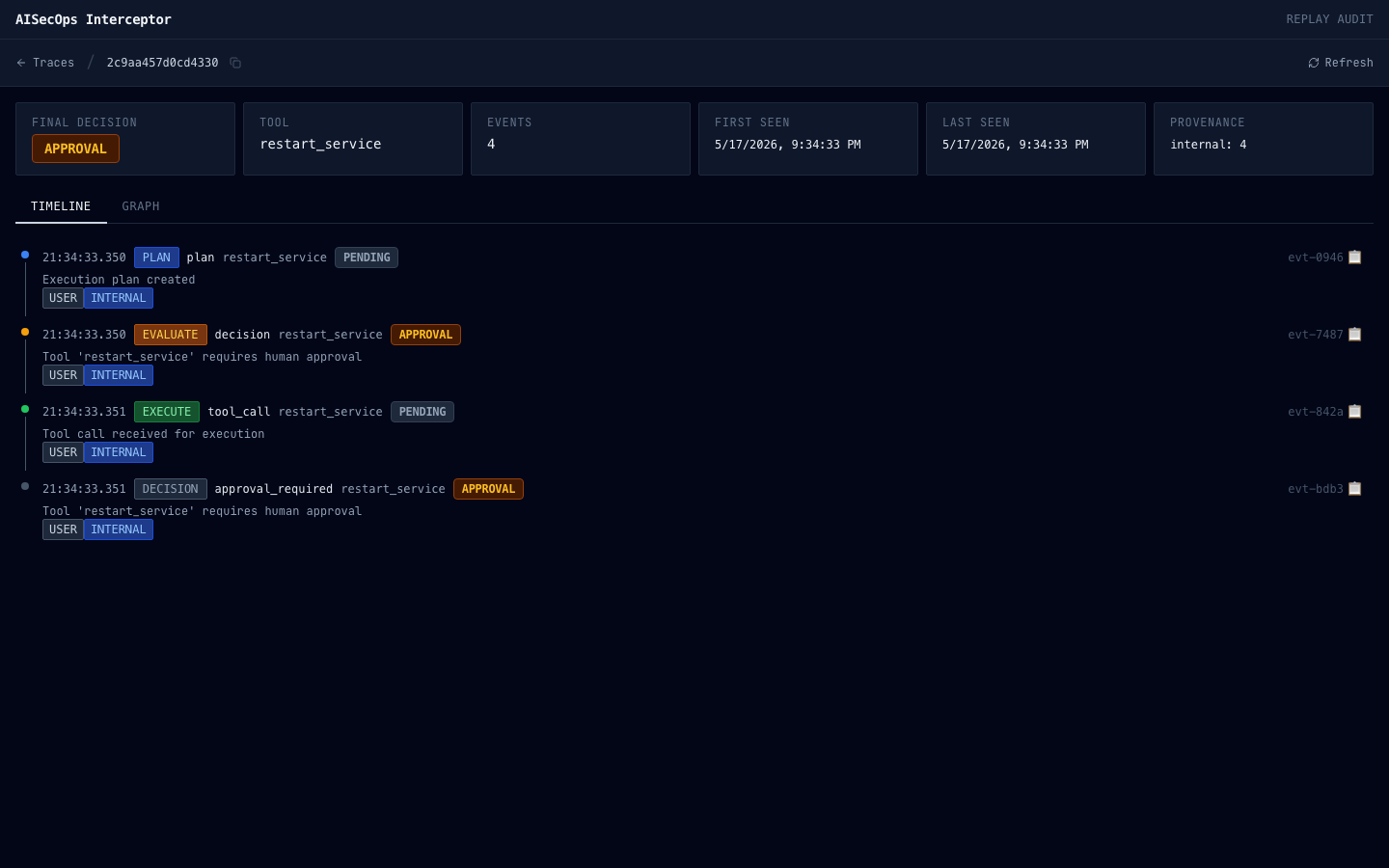

Replay execution history step by step.

Structured replay timelines reconstruct planning, evaluation, approval, execution, and final governance decisions in execution order.
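A minimal sketch of how such a timeline could be rebuilt, assuming each event carries a monotonically increasing sequence number assigned when it is emitted. The `TimelineEvent` type, the `STAGES` tuple, and `build_timeline` are hypothetical names for illustration, not part of a documented AISecOps interface.

```python
from dataclasses import dataclass

# Hypothetical stage names covering planning, evaluation, approval,
# execution, and the final governance decision.
STAGES = ("plan", "evaluate", "approve", "execute", "decide")


@dataclass(frozen=True)
class TimelineEvent:
    seq: int     # assumed emission sequence number (not wall-clock time)
    stage: str   # one of STAGES
    detail: str


def build_timeline(events):
    """Order events by emission sequence and render one numbered line per step."""
    ordered = sorted(events, key=lambda e: e.seq)
    for e in ordered:
        if e.stage not in STAGES:
            raise ValueError(f"unknown stage: {e.stage!r}")
    return [f"{i + 1}. [{e.stage}] {e.detail}" for i, e in enumerate(ordered)]


# Events may be collected out of order; the timeline restores execution order.
events = [
    TimelineEvent(3, "execute", "tool call: send_email"),
    TimelineEvent(1, "evaluate", "policy outbound-email: require_approval"),
    TimelineEvent(0, "plan", "plan: draft and send summary email"),
    TimelineEvent(2, "approve", "approved by reviewer"),
    TimelineEvent(4, "decide", "final outcome: allowed"),
]
for line in build_timeline(events):
    print(line)
```

Sorting on a sequence number rather than a timestamp is a design assumption here: wall-clock times from different components can collide or drift, while a per-trace counter gives an unambiguous execution order.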

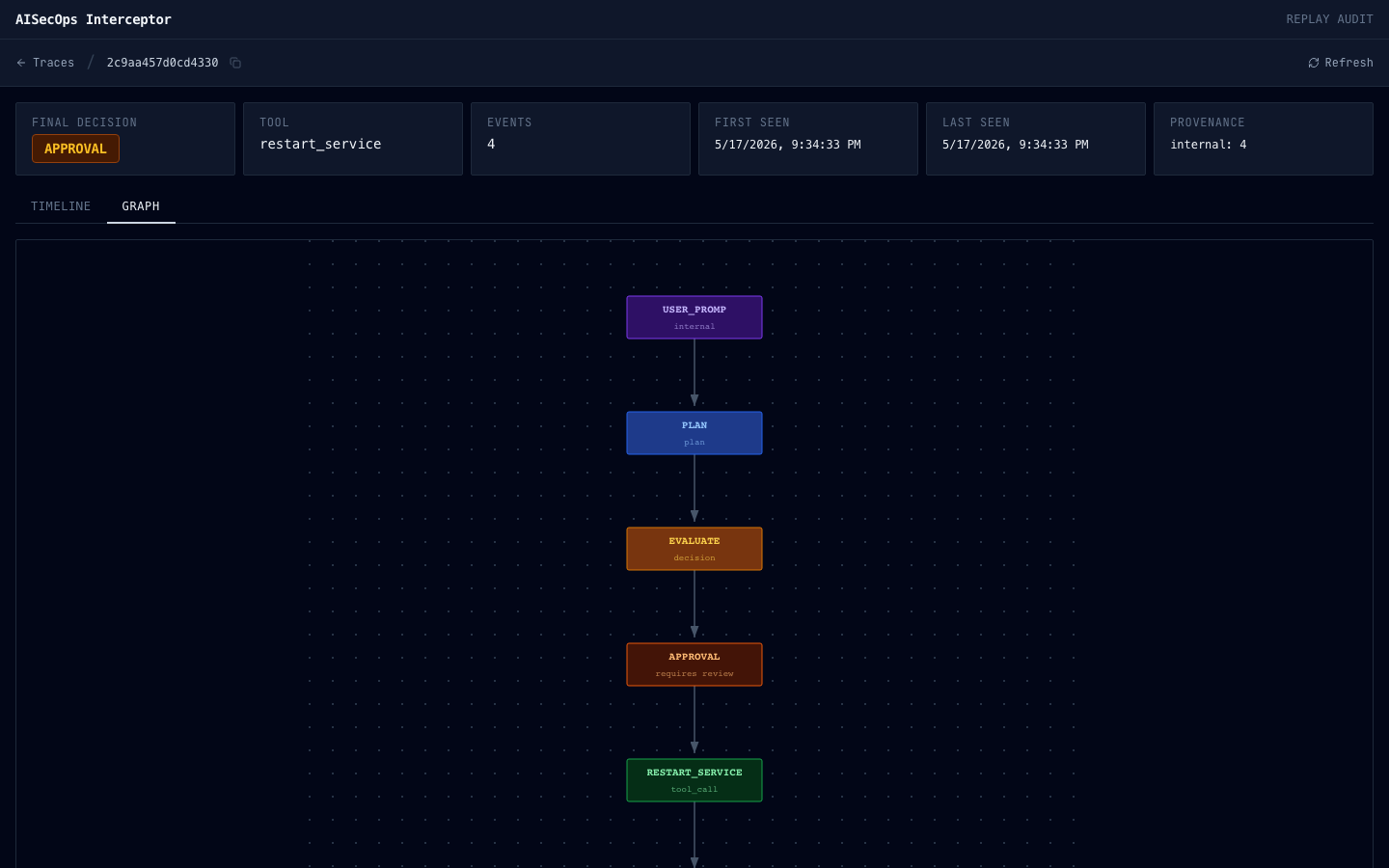

Visualize provenance-aware execution flow.

Execution graphs reconstruct causal runtime relationships between provenance sources, planning stages, policy evaluation, approvals, tool execution, and final outcomes.
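One way to model such a graph is a mapping from each node to the nodes that caused it. The sketch below assumes that representation, with illustrative node names (provenance sources prefixed `src:`) that are hypothetical rather than an AISecOps schema. It uses the standard library's `graphlib.TopologicalSorter` (Python 3.9+) to produce a cause-before-effect order, and walks edges backward to find which provenance sources influenced a given node.

```python
from graphlib import TopologicalSorter

# Hypothetical causal edges: each node maps to the set of nodes it depends on.
EDGES = {
    "plan": {"src:user_prompt", "src:retrieved_doc"},
    "policy_eval": {"plan", "src:retrieved_doc"},
    "approval": {"policy_eval"},
    "tool_exec": {"approval"},
    "outcome": {"tool_exec", "policy_eval"},
}


def causal_order(edges):
    """Return all nodes in an order where every cause precedes its effects."""
    return list(TopologicalSorter(edges).static_order())


def provenance_sources(edges, node):
    """Collect every provenance source (nodes prefixed 'src:') upstream of `node`."""
    seen, stack = set(), [node]
    while stack:
        current = stack.pop()
        for parent in edges.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return sorted(n for n in seen if n.startswith("src:"))


print(causal_order(EDGES))
print(provenance_sources(EDGES, "outcome"))
# prints: ['src:retrieved_doc', 'src:user_prompt']
```

The backward walk is what supports forensic questions such as "did untrusted retrieved content influence this tool call?"; `TopologicalSorter` also raises `graphlib.CycleError` if the recorded edges ever form a cycle, which would indicate a malformed trace.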